Published

About Intellistack

Intellistack (formerly Formstack) is a private equity-backed workflow automation company founded in 2006 that serves over 32,000 organizations worldwide, including Kaiser Permanente, Shell, Shopify, and Netflix. Backed by PSG Equity and Silversmith Capital Partners, the company provides no-code solutions for form building, document generation, eSignature collection, and workflow automation. In June 2025, the company rebranded from Formstack to Intellistack and launched Intellistack Streamline, a market-first no-code platform for AI-native process automation.

Intellistack employs over 200 people with approximately 80 engineers organized across backend, frontend, infrastructure, and solutions teams. The technology and engineering teams prioritized adopting AI tools to drive scale, efficiency, and speed across the software development lifecycle (SDLC), enabling them to successfully launch their AI-native, no-code workflow platform and rapidly mature it.

The Challenge

When spreadsheets become the bottleneck to proving AI value

AI adoption became a critical company-wide initiative at Intellistack, with every department focused on AI education and day-to-day usage. On Intellistack’s technology team, engineers were using Cursor and Claude Code extensively. Engineering leaders were running internal training sessions on best practices, rolling out LangSmith and evals frameworks, and coaching teams on advanced prompting techniques. The work felt transformative, and it was especially critical as Intellistack prepared to launch its own AI-native platform, Intellistack Streamline.

When Intellistack CTO Dave Cole needed to tell the story of AI adoption to private equity backers or the board, he faced a frustrating reality: proving the impact required weeks of manual work pulling data from multiple dashboards into Excel spreadsheets.

"I still had to use a spreadsheet for my analysis," Dave explains. "I wanted to look at Slack data, Jira tickets, and GitHub activity. I was exporting usage data directly from the Claude Code Dashboard and from Cursor's Dashboard, then manually categorizing engineers based on their usage levels."

The process was tedious, and the insights were limited. Dave and his engineering leadership could see who was using AI tools, but they couldn't answer the questions that really mattered: Was AI adoption actually improving output? Which teams were seeing the biggest gains? Were the frontend and backend teams benefiting equally? And most critically for PE backers: what was the actual ROI on the tens of thousands of dollars being spent monthly on AI tooling?

The company had previously used Code Climate for engineering analytics, but the legacy platform focused primarily on code quality metrics without any visibility into AI usage. For a company in the middle of a major rebrand and a product launch into AI-native workflows, having concrete data to justify and optimize internal AI investments was essential.

The Solution

Automated measurement that replaces manual busywork

Intellistack chose Weave for a simple reason: it solved both problems at once. The platform automatically tracked code output across all engineers while simultaneously measuring AI usage from Cursor, Claude Code, and GitHub Copilot without requiring any manual data exports, spreadsheet manipulation, or engineering overhead.

After connecting GitHub, Cursor, and Claude Code integrations, the platform immediately began providing historical insights going back 6 months. No tagging, no surveys, no overhead for the engineering team.

Weave's code output algorithm gave Intellistack a way to measure engineering productivity that accounted for complexity, not just volume. Unlike raw metrics such as lines of code or PR counts, the algorithm used AI to understand what each pull request actually accomplished, making it possible to compare a simple dependency update and a complex feature build on equal footing.

Intellistack integrated Weave's data into several key workflows:

Executive Reporting: Dave used the platform to create comprehensive analyses combining Weave metrics with Slack activity, Jira data, and GitHub stats, running everything through AI to identify engagement patterns and surface potential issues—eliminating the hours of monthly manual work previously required before board meetings.

Strategic AI Adoption: The team began using Weave's component-level breakdown to understand where AI was having the most impact. They could see that backend teams were seeing dramatic productivity gains, while frontend teams were lagging behind, surfacing specific areas for improvement in their AI adoption strategy.

Training Effectiveness: Engineering leadership used the platform to understand not just who was using AI, but how they were using it. After running training sessions on advanced Cursor techniques like proper use of "plan mode" and automated test writing, they could track whether those practices were actually being adopted and whether adoption correlated with output improvements.

Day-to-Day Management: Engineering Director Chris Webber incorporated Weave data into team conversations, using it as an invitation for engineers to get curious about their own productivity patterns and AI usage, identifying where individuals needed more training versus more encouragement for adoption.

The Results

Upper percentile performance and board-ready reporting

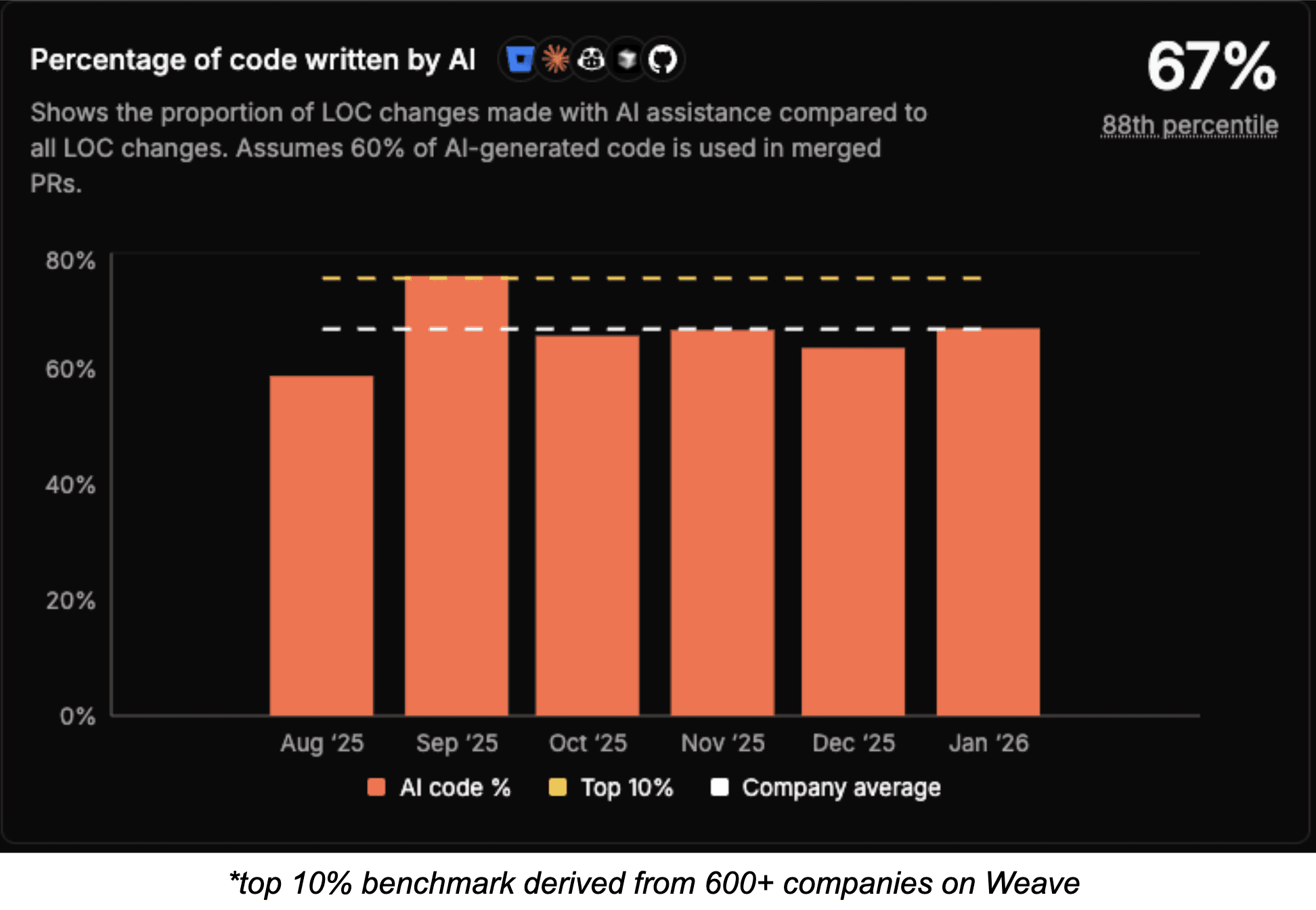

Intellistack now consistently ranks in the upper percentile for AI usage compared to industry benchmarks. Over the seven months in which the team actively pushed AI adoption with Weave's data as its guide, output increased dramatically, providing both the technical foundation and the credibility to launch an AI-native platform to Intellistack's own customers.

The component-level insights revealed opportunities the team hadn't anticipated. Backend teams were excelling with AI adoption, but frontend teams showed room for improvement, revealing specific areas where additional training and support could drive even greater gains.

The weekly email digest became one of the few automated emails the team actually reads, surfacing "silent hero work behind the scenes" that might otherwise go unnoticed and providing visibility into contributions across the engineering organization.

The platform also helped surface issues before they became problems. In one case, Weave data showed concerning activity patterns that turned out to be engineers using personal accounts instead of company accounts for AI tools, immediately flagging a security issue that could have gone unnoticed for months.

Most importantly, Weave eliminated the manual reporting burden that had been consuming hours every month. No more exporting CSV files from multiple dashboards, no more Excel formulas, no more scrambling before board meetings. The data was always current, always accessible, and always accurate.

"We use it as a way to help drive adoption; it’s an invitation to become curious, you can determine if some people need more training or encouragement for greater AI adoption." — Chris Weber, Engineering Director, Intellistack
